Pairwise 3D registration evaluation: methodology, datasets and results

Although the literature on pairwise registration is mature and includes many remarkable works, these works surprisingly lack adequate experimental evaluation. This may be due to the absence of an acknowledged benchmark, let alone standard methodologies, on which to ground the evaluation process. As a result, we found it exceedingly difficult, if not impossible, to determine which coarse registration pipelines are most effective and under which conditions. The work in [1] addresses this issue by proposing a benchmark that compares 4 state-of-the-art methods on 24 datasets acquired by different sensors (Kinect V1, Space Time Stereo and different types of laser scanners).

Methodology

Given a dataset of M views, the benchmark considers all the N = M x (M-1) / 2 possible view pairs {VI,VJ} and, for each of them, attempts to estimate the rigid motion RT(VJ) that aligns VJ to VI by means of the coarse registration algorithm under evaluation. Then, Generalized ICP [7] is applied to the pair {VI,RT(VJ)}, and the resulting view ICP(VJ) is compared to GT(VJ), the view obtained by transforming VJ according to the known ground truth rigid motion that aligns VJ to VI. In particular, if the Root Mean Square Error (RMSE) between ICP(VJ) and GT(VJ) is lower than 5 times the mesh resolution, VI and VJ are judged as correctly registered by the algorithm under evaluation; otherwise, a registration failure is recorded. In the event of a successful registration, the RMSE between RT(VJ) and GT(VJ), i.e. before the ICP refinement, is also recorded in order to estimate the accuracy of the algorithm.
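The pair enumeration and the success criterion described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the code of the C++ framework: the function names are hypothetical, and the RMSE is computed under the assumption that the two views being compared are transformed copies of the same view VJ, so that their points correspond index by index.

```python
import math
from itertools import combinations


def view_pairs(num_views):
    """Return all unordered view pairs (i, j) with i < j."""
    return list(combinations(range(num_views), 2))


def rmse(points_a, points_b):
    """RMSE between two equally long lists of corresponding 3D points."""
    if len(points_a) != len(points_b) or not points_a:
        raise ValueError("point lists must be non-empty and of equal length")
    total = sum(
        (ax - bx) ** 2 + (ay - by) ** 2 + (az - bz) ** 2
        for (ax, ay, az), (bx, by, bz) in zip(points_a, points_b)
    )
    return math.sqrt(total / len(points_a))


def is_registered(refined_view, ground_truth_view, mesh_resolution, factor=5.0):
    """Success test: RMSE(ICP(VJ), GT(VJ)) < factor * mesh resolution."""
    return rmse(refined_view, ground_truth_view) < factor * mesh_resolution


pairs = view_pairs(8)  # a dataset with M = 8 views
print(len(pairs))      # M * (M - 1) / 2 = 28
```

The threshold factor defaults to 5, the value stated in the text; the mesh resolution has to be supplied by the caller, since the way it is computed is not described here.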

The C++ framework that performs the evaluation can be downloaded from here.

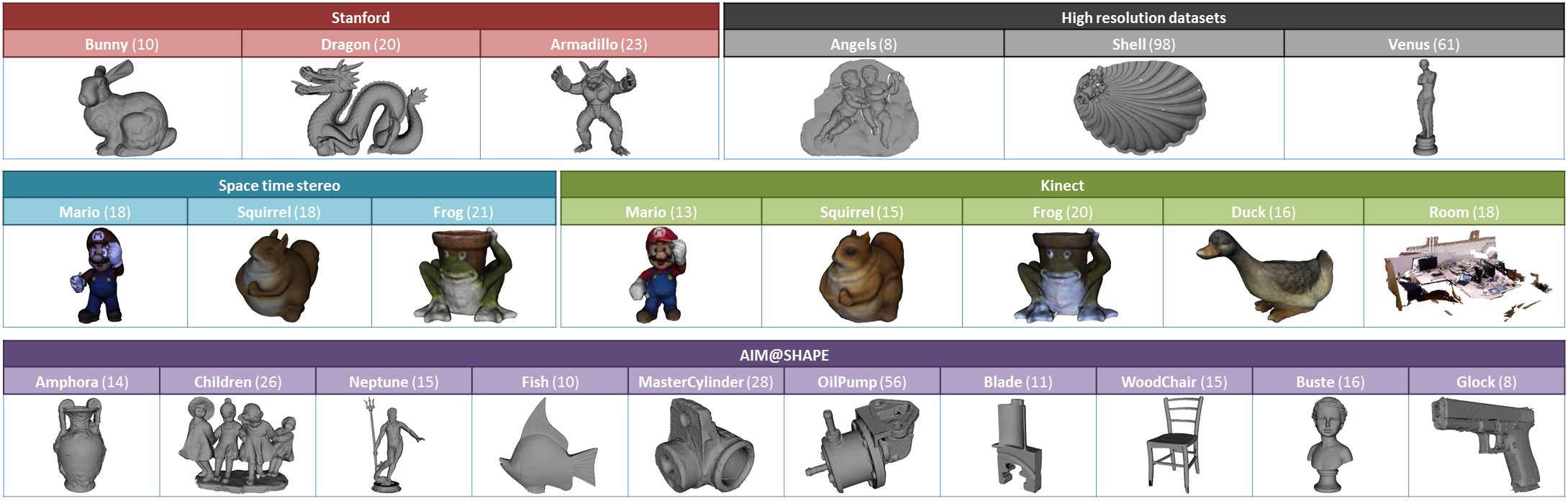

Datasets

The benchmark is performed on 24 datasets acquired by different sensors (Kinect V1, Space Time Stereo and different types of laser scanners).

For each dataset, a ZIP archive contains the set of partial views in PLY format and a groundTruth.txt file with the 4x4 matrices representing the rigid motions that align each partial view to the fully registered model.
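As an illustration of how such a 4x4 matrix acts on a view, the sketch below applies a homogeneous rigid motion to a list of 3D points. It assumes the common column-vector convention (p' = R p + t, with the translation in the last column) and a row-major nested-list layout; neither the convention nor the exact layout of groundTruth.txt is specified here, and the function name and example matrix are hypothetical.

```python
def apply_rigid_motion(matrix, points):
    """Apply a 4x4 homogeneous rigid motion (row-major nested lists) to 3D points."""
    moved = []
    for x, y, z in points:
        # Only the top three rows matter: rotation in columns 0-2, translation in column 3.
        moved.append(tuple(
            row[0] * x + row[1] * y + row[2] * z + row[3]
            for row in matrix[:3]
        ))
    return moved


# Hypothetical motion: 90-degree rotation about the z axis, then a shift of 1 along x.
motion = [
    [0.0, -1.0, 0.0, 1.0],
    [1.0,  0.0, 0.0, 0.0],
    [0.0,  0.0, 1.0, 0.0],
    [0.0,  0.0, 0.0, 1.0],
]
print(apply_rigid_motion(motion, [(1.0, 0.0, 0.0)]))  # [(1.0, 1.0, 0.0)]
```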

When the range maps are available, the ZIP archive also contains a set of text files storing the lattice and the (x, y, z) coordinates of the range maps. The files are formatted as follows:

N x M matrix storing the y coordinates

N x M matrix storing the z coordinates

Stanford 3D Scanning Repository:

AIM@SHAPE Shape Repository:

The datasets are provided courtesy of their owners by the AIM@SHAPE Shape Repository and are distributed under the AIM@SHAPE license.

- Glock (8 views - 7 MB - owner: UU)

- Fish (10 views - 8 MB - owners: IMATI, CNR)

- Amphora (14 views - 13 MB - owners: CNR, IMATI)

- Neptune (15 views - 22 MB - owners: IMATI, INRIA)

- Buste (16 views - 3 MB - owner: UU)

- Dancing Children (26 views - 36 MB - owner: INRIA)

- MasterCylinder (28 views - 12 MB - owner: INRIA)

- OilPump (56 views - 72 MB - owner: INRIA)

- Blade (11 views - 7 MB - owners: IMATI, CNR)

- WoodChair (15 views - 9 MB - owners: IMATI, CNR)

Space time stereo:

The datasets have been acquired by a Space Time Stereo setup [5,6] and are released under the Creative Commons 3.0 Attribution License (CC-BY 3.0).

- FrogStereo (21 views - 28 MB)

- MarioStereo (18 views - 22 MB)

- SquirrelStereo (18 views - 16 MB)

Kinect:

The datasets have been acquired by a Kinect V1 sensor and are released under the Creative Commons 3.0 Attribution License (CC-BY 3.0). The Room dataset has been built by sampling the fr1/room sequence from the RGB-D SLAM Benchmark every 10 frames so as to obtain a dataset comprising 18 partial views. As the ground truth provided by the RGB-D SLAM Benchmark is not sufficiently reliable for evaluating pairwise registration, we performed a further fine registration step so as to obtain more precise rigid motions between pairs of views. Accordingly, whereas for all the other datasets we provide the M rigid motions that align the M views to a unique reference frame, in the case of the Room dataset we provide the N = M x (M-1) / 2 rigid motions that align each view pair. This exception is handled seamlessly by the framework.
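When only the M per-view motions to a common reference frame are available, the ground-truth motion for a pair can be derived by composition. The following sketch assumes that T_I and T_J map views VI and VJ into the common frame under the column-vector convention, so that the motion aligning VJ to VI is inverse(T_I) · T_J. The function names are hypothetical and the code is not taken from the framework.

```python
def invert_rigid(m):
    """Invert a 4x4 rigid motion: rotation R^T, translation -R^T t."""
    r_t = [[m[c][r] for c in range(3)] for r in range(3)]
    t = [m[r][3] for r in range(3)]
    inv_t = [-sum(r_t[r][c] * t[c] for c in range(3)) for r in range(3)]
    return [r_t[r] + [inv_t[r]] for r in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def compose(a, b):
    """Matrix product a * b of two 4x4 matrices stored as nested lists."""
    return [
        [sum(a[r][k] * b[k][c] for k in range(4)) for c in range(4)]
        for r in range(4)
    ]


def pairwise_motion(motion_i, motion_j):
    """Motion that aligns view J to view I, given both motions to a common frame."""
    return compose(invert_rigid(motion_i), motion_j)


def translation(tx, ty, tz):
    """Helper building a pure translation, used only for the example."""
    return [[1.0, 0.0, 0.0, tx], [0.0, 1.0, 0.0, ty],
            [0.0, 0.0, 1.0, tz], [0.0, 0.0, 0.0, 1.0]]


relative = pairwise_motion(translation(1.0, 0.0, 0.0), translation(3.0, 0.0, 0.0))
print(relative[0][3])  # 2.0
```

For the Room dataset this step would not be needed, since the pairwise motions are provided directly.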

- FrogKinect (20 views - 14 MB)

- MarioKinect (13 views - 4 MB)

- SquirrelKinect (15 views - 4 MB)

- DuckKinect (16 views - 10 MB)

- Room (18 views - 65 MB)

High resolution datasets:

- Angels (8 views - 234 MB)

- Shell (98 views - 1.15 GB). Due to a confidentiality agreement, we cannot provide this dataset.

- Venus (61 views - 1.39 GB)

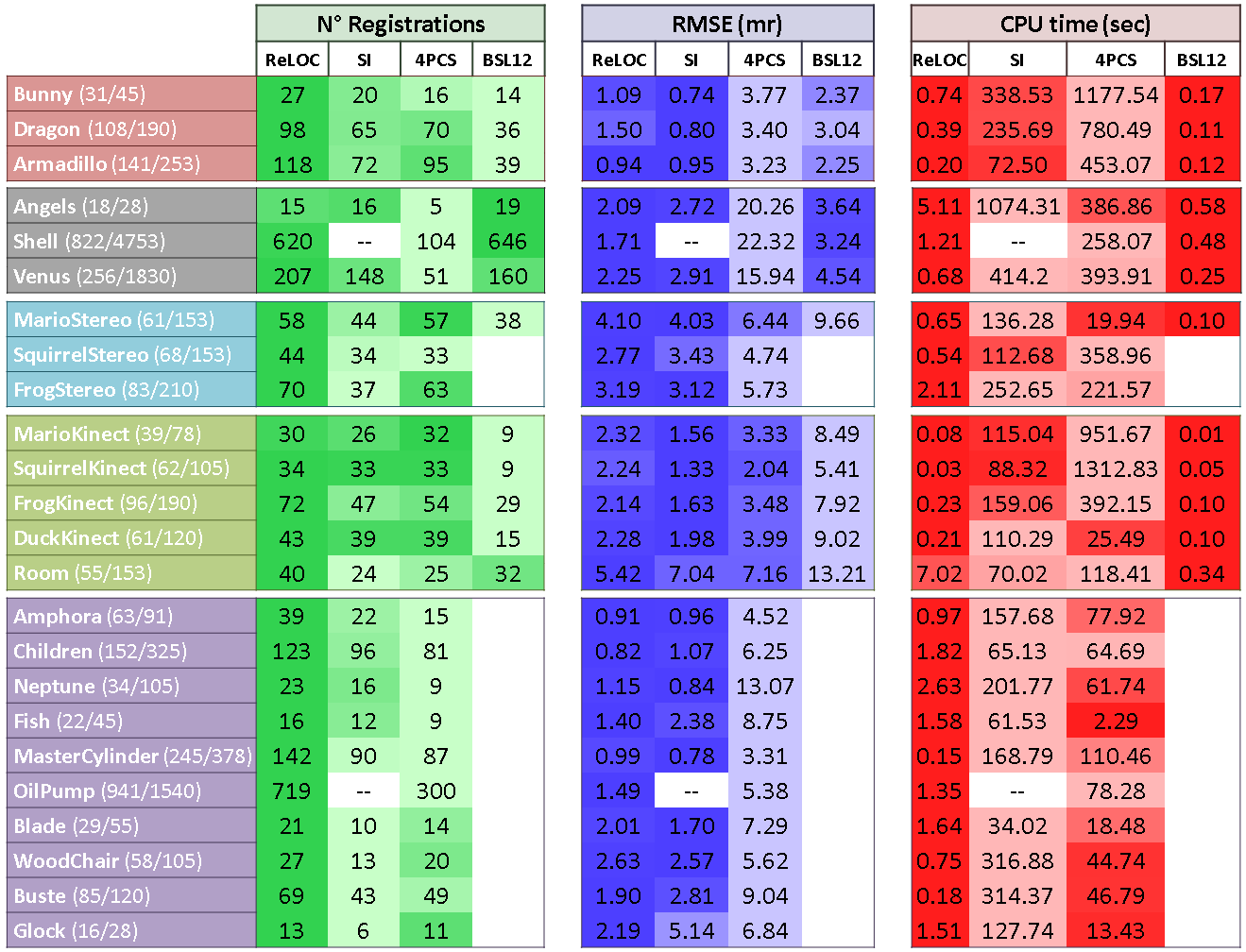

Experimental Results

The table compares the performance of the method proposed in [1], referred to here as ReLOC, with that of a pipeline based on the Spin Image descriptor (SI) [4], the 4-points congruent sets algorithm (4PCS) [2] and the method proposed in [3] (BSL12).

For each dataset and algorithm the following three measurements are collected:

- N° Registrations: number of correctly aligned view pairs.

- RMSE: average accuracy (i.e. RMSE) across all correctly registered view pairs.

- CPU Time: average execution time needed to compute the rigid motion that aligns a view pair (regardless of whether the outcome is a success or a failure).
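The three measurements listed above could be aggregated from per-pair outcomes roughly as follows. This is a minimal sketch with hypothetical names (`PairResult`, `summarize`) and made-up example values; it only mirrors the definitions given in the list and is not the framework's own reporting code.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class PairResult:
    success: bool
    rmse: Optional[float]  # RMSE of the coarse alignment, recorded only on success
    cpu_time: float        # time spent estimating the rigid motion for this pair


def summarize(results):
    """Aggregate per-pair outcomes into the three per-dataset measurements."""
    successes = [r for r in results if r.success]
    return {
        # Number of correctly aligned view pairs.
        "registrations": len(successes),
        # Average RMSE over correctly registered pairs only.
        "rmse": mean(r.rmse for r in successes) if successes else None,
        # Average time over all pairs, whether they succeeded or failed.
        "cpu_time": mean(r.cpu_time for r in results) if results else None,
    }


# Made-up outcomes for three view pairs.
example = [
    PairResult(True, 0.5, 2.0),
    PairResult(True, 1.5, 4.0),
    PairResult(False, None, 6.0),
]
print(summarize(example))
```

Note that the RMSE average excludes failed pairs while the CPU time average includes them, so the two figures are computed over different sets of pairs.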

The adopted color code (the darker the better for all three measurements) makes it easy to compare, at a glance, the relative performance of the algorithms.

The experimental results are also summarized in this Excel spreadsheet.

References

| [1] | Petrelli A., Di Stefano L. "Pairwise registration by local orientation cues" Computer Graphics Forum (2015). |

| [2] | Aiger D., Mitra N. J., Cohen-Or D. "4-points congruent sets for robust surface registration" ACM Transactions on Graphics 27, 3 (2008). |

| [3] | Bonarrigo F., Signoroni A., Leonardi R. "Multiview alignment with database of features for an improved usage of high-end 3D scanners" EURASIP Journal on Advances in Signal Processing, 1 (2012). |

| [4] | Johnson A. E., Hebert M. "Surface registration by matching oriented points" International Conference on 3D Digital Imaging and Modeling (1997). |

| [5] | Davis J., Nehab D., Ramamoorthi R., Rusinkiewicz S. "Spacetime stereo: A unifying framework for depth from triangulation" Transactions on Pattern Analysis and Machine Intelligence 27, 2 (2005), 296–302. |

| [6] | Zhang L., Curless B., Seitz S. M. "Spacetime stereo: Shape recovery for dynamic scenes" Conference on Computer Vision and Pattern Recognition (2003). |

| [7] | Segal A., Haehnel D., Thrun S. "Generalized-ICP" Robotics: Science and Systems (2009). |

© 2015 The Authors Computer Graphics Forum © 2015 The Eurographics Association and John Wiley & Sons Ltd.